Quantum Computing Hardware in Silicon Valley 2026

“Quantum computing hardware in Silicon Valley 2026” is more than a topical headline; it signals a real inflection point where research results begin to collide with market requirements. Silicon Valley’s unique blend of university-led talent, venture capital momentum, and world-class hardware ecosystems has long been touted as an engine for quantum progress. In 2026, that engine is starting to turn more of its gears in public, testable, near-term ways. Notably, Google Quantum AI’s Willow chip, manufactured in Santa Barbara and integrating 105 qubits with record-level error characteristics, offers a concrete, lab-validated milestone that the broader SV hardware story now relies upon to push toward practical outcomes. This is not a distant dream; it is a pivot point with measurable consequences for who builds, who funds, and who finally deploys quantum-enhanced technologies. (blog.google)

My thesis is clear and intentionally provocative: Silicon Valley’s hardware ecosystem is increasingly the decisive lever for practical, near-term quantum advances, even as the field continues to chase fault-tolerant, scalable architectures. The valley’s advantage rests less on a single breakthrough and more on an integrated pipeline—chip design, advanced packaging, cryogenics, control electronics, software ecosystems, and customer co-development—that translates laboratory performance into market-ready capabilities. The Willow milestone demonstrates both the promise and the limits of current hardware, and it compels a cautious but confident forecast: SV will shape the hardware platform that underpins quantum applications in finance, chemistry, materials science, and optimization in the near term, even as the long-run goal of universal fault-tolerant quantum computing remains several years out. (blog.google)

Section 1: The Current State

The Silicon Valley hardware ecosystem today

Silicon Valley remains a magnet for quantum hardware activity, attracting a mix of deep technical startups, university partnerships, and corporate R&D labs. Google Quantum AI operates at the center of this ecosystem, leveraging its California-based hardware development to push the boundaries of superconducting qubits, coherence, and error suppression. Willow, the 105-qubit processor announced in late 2024 and detailed in early 2025, exemplifies an SV-driven hardware program that couples chip fabrication with sophisticated control and verification workflows. Manufactured in Santa Barbara, Willow’s architecture and measurements underscored a crucial point: error rates can be suppressed as systems scale, enabling more complex quantum circuits to run with meaningful fidelity. This is a foundational capability for SV’s broader hardware strategy and a signal to the market that SV is ready to demonstrate practical, near-term quantum operations. (blog.google)

Beyond Google, the Bay Area and broader SV harbor other players that collectively sustain a hardware-centric ecosystem. PsiQuantum, based in Palo Alto, is pursuing silicon photonic quantum computing with a stated mission to deploy useful quantum machines; QC Ware operates as a quantum software and services outfit in Palo Alto that helps enterprises access hardware across vendors, underscoring the region’s emphasis on cross-vendor interoperability and cloud-to-quantum workflows. These entities illustrate an SV-centric strategy that emphasizes not only qubit physics but also the ancillary systems (control, readout, cryogenics, packaging, and developer tools) that determine real-world usability. PsiQuantum and QC Ware, among others, anchor a Valley-wide culture of hardware-software co-design and practical deployment planning. (psiquantum.com)

In parallel, the broader quantum hardware landscape includes significant non-SV players, including IBM, which maintains a robust hardware roadmap with ambitious multi-thousand-qubit targets and modular architectures designed to scale well beyond current chip counts. IBM’s public roadmaps articulate a path toward near-term quantum advantage by 2026 and a long-run objective of fault-tolerant systems with thousands of qubits, leveraging innovations like long-range couplers and modular quantum systems. While IBM’s headquarters are not in Silicon Valley, its SV engagement—through partnerships, ecosystems, and talent flow—continues to influence how hardware thinking migrates to practical deployments. (ibm.com)

Intel’s SV presence adds another layer to the story. Recent reporting on advanced packaging technologies (such as EMIB-T) and the push to scale silicon-interconnect capabilities speaks to the Valley’s ongoing role in hardware integration and manufacturing innovations that will eventually support larger quantum systems. The SV context for quantum hardware is increasingly about how to connect many qubits, control lines, and cryogenic interfaces in manufacturable, scalable ways, a problem that SV-based actors are well positioned to address given their established strength in device packaging, interconnects, and system-level design. (tomshardware.com)

Finally, the SV hardware story is enriched by a cadre of research institutions and tech press that document the region’s ongoing momentum. Stanford and other major research hubs are deeply involved in hardware likely to influence SV’s quantum trajectory, and industry watchers have begun to frame 2026 as a year in which “near-term practical quantum hardware” in the SV ecosystem is not just possible but actively shaping customer conversations and investment theses. The commentary from Stanford-affiliated sources and industry analysts emphasizes that the hardware clock for quantum computing is now running at a tempo where production-like systems and early applications can emerge within a few years rather than a decade. (setr.stanford.edu)

What the numbers tell us is a hybrid story: Willow’s 105-qubit milestone, with below-threshold error correction demonstrated in a lab setting, is a symbolic achievement that translates into practical leverage for SV-based teams aiming to build more capable devices and more credible demonstrations. Google’s own communications quantify fidelity metrics across its array: single-qubit gate fidelities around 99.97%, two-qubit entangling fidelities near 99.88%, and readout fidelity around 99.5%, all within fast gate times on the order of tens to hundreds of nanoseconds. Those numbers matter because they bound how deep a circuit can run before accumulated errors swamp the result, and therefore how quickly developers can prototype, test, and scale quantum circuits in real-world workflows. This is not theoretical performance; it is the working currency of Silicon Valley-level hardware development. (blog.google)
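To make the link between per-operation fidelity and usable circuit size concrete, here is a rough back-of-the-envelope sketch. It assumes errors are independent and simply multiplies per-operation fidelities, which is a common first-order approximation rather than Google’s own methodology. The function name and the gate counts are hypothetical; only the three default fidelities come from the figures quoted above.

```python
def estimated_circuit_fidelity(
    n_1q: int,
    n_2q: int,
    n_readout: int,
    f_1q: float = 0.9997,      # single-qubit gate fidelity quoted above
    f_2q: float = 0.9988,      # two-qubit entangling fidelity quoted above
    f_readout: float = 0.995,  # readout fidelity quoted above
) -> float:
    """Crude estimate of whole-circuit fidelity.

    Assumption: every operation fails independently, so the overall
    fidelity is the product of the per-operation fidelities. Real
    devices have correlated and idle errors that this ignores.
    """
    if min(n_1q, n_2q, n_readout) < 0:
        raise ValueError("operation counts must be non-negative")
    return (f_1q ** n_1q) * (f_2q ** n_2q) * (f_readout ** n_readout)


if __name__ == "__main__":
    # Hypothetical workloads: fidelity falls quickly as two-qubit gate count grows.
    for n_2q in (10, 100, 1000):
        est = estimated_circuit_fidelity(n_1q=2 * n_2q, n_2q=n_2q, n_readout=20)
        print(f"{n_2q:>5} two-qubit gates -> estimated fidelity {est:.3f}")
```

Under this simple model the two-qubit gate count dominates the estimate, which is why small improvements in entangling fidelity translate into noticeably deeper runnable circuits.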

Progress on qubits and error suppression

The Willow milestone is more than a headline; it is a data point that informs the broader SV trajectory toward more trustworthy and scalable quantum hardware. Google’s reporting on Willow emphasizes two key benchmarks: error suppression capable of enabling below-threshold quantum error correction and the ability to perform sampling of random circuits at a scale and speed unmatched by classical systems in representative tasks. The Willow narrative thus provides a proof of concept for the implicit SV claim: that a combination of scale, coherence, and control can yield meaningful improvements in quantum error regimes. The practical takeaway for the 2026 SV hardware landscape is that progress is measurable, repeatable, and integrated with software ecosystems that can exploit these improvements in near-term applications. And in that sense, Willow anchors a credible SV narrative about “hardware progress with real, near-term value.” (blog.google)
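What “below threshold” buys in practice can be illustrated with the standard surface-code scaling picture: once physical error rates are below threshold, each increase of the code distance by two divides the logical error rate by a roughly constant suppression factor. The sketch below assumes that idealized scaling. The suppression factor, reference distance, and reference error rate used in the demo are illustrative placeholders, not figures reported in this article.

```python
def logical_error_per_cycle(
    distance: int,
    suppression_factor: float,
    ref_distance: int,
    ref_error: float,
) -> float:
    """Projected logical error per cycle at a given odd code distance.

    Assumption: idealized exponential suppression, where going from
    distance d to d + 2 divides the logical error by suppression_factor.
    A factor greater than 1 corresponds to operating below threshold.
    """
    if distance % 2 == 0 or ref_distance % 2 == 0:
        raise ValueError("surface-code distances are assumed odd here")
    if suppression_factor <= 0 or ref_error <= 0:
        raise ValueError("suppression_factor and ref_error must be positive")
    steps = (distance - ref_distance) / 2
    return ref_error / (suppression_factor ** steps)


if __name__ == "__main__":
    # Hypothetical inputs: 1% logical error at distance 3, factor of 2 per step.
    for d in (3, 5, 7, 9, 11):
        eps = logical_error_per_cycle(d, suppression_factor=2.0,
                                      ref_distance=3, ref_error=0.01)
        print(f"distance {d:>2}: projected logical error per cycle {eps:.5f}")
```

If the suppression factor were below 1 (above threshold), the same formula would show errors growing with distance, which is why crossing the threshold is treated as the qualitative milestone.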

To understand why this matters in a market sense, it helps to compare Willow with contemporaneous SV and non-SV efforts. IBM’s hardware roadmaps remain the closest counterpoint to Google’s demonstration: IBM is pursuing a phased, modular approach to achieving fault-tolerant operation, including multi-thousand-qubit targets and the next generation of qubits and couplers that enable long-range connectivity. The company’s public materials describe a path toward 4,000+ qubits capable of fault-tolerant operation by the latter part of the decade, with a recognized emphasis on bridging the gap between noisy intermediate-scale quantum (NISQ) devices and scalable quantum computing. While the IBM roadmap is not SV-exclusive, its presence in the global conversation helps frame Willow and similar SV milestones as credible, commercially meaningful steps rather than isolated research curiosities. (ibm.com)

In addition to the Willow milestone, the SV hardware scene includes ongoing work on packaging, cryogenics, and interconnect technologies that are essential to scale. Intel’s public coverage of advanced packaging approaches and the EMIB family illustrates the broader industry push to connect many components across chips and substrates—an essential precursor to scaling quantum processors beyond current qubit counts. The fact that these packaging innovations are being actively demonstrated and discussed in 2026 underscores an SV advantage: the Valley houses a robust ecosystem for turning laboratory ideas into manufacturable, scalable hardware platforms. (tomshardware.com)

Commercialization and performance benchmarks

The SV hardware story is increasingly about how to translate performance metrics into commercial value. Google’s communications about Willow are not merely progress notes; they are a signal to developers and enterprise customers that SV can deliver verifiable capabilities that map to real-world tasks, such as quantum-assisted learning, optimization, and chemistry simulations. The early success of Willow in achieving low error rates and high gate fidelities provides a credible basis for cloud-access models, developer tools, and enterprise pilots that Silicon Valley firms are uniquely positioned to orchestrate. As the SV ecosystem matures, expect more cross-vendor collaborations and hybrid workflows that combine superconducting qubits, photonic components, and advanced control software to deliver practical benefits in the near term. (blog.google)

Counterarguments exist, of course. Some observers argue that the true economic value of quantum hardware will hinge on fault-tolerant architectures that can scale to thousands or millions of qubits with robust error correction. They argue that current hardware progress, impressive as Willow may be, represents a bridge rather than a destination. This is a fair critique and one that IBM’s ongoing roadmaps actively address. The SV response to this critique is not denial; it is a pragmatic expansion: invest in hardware readiness and co-design software with customers so that when fault-tolerant systems arrive, there is a ready-made, market-ready pathway to deployment. This is precisely the kind of ecosystem work that SV is renowned for: turning scientific breakthroughs into scalable platforms with practical use cases and customer traction. (ibm.com)

Section 2: Why I Disagree

Why I disagree with the notion that SV is merely chasing a distant dream

Argument 1: SV is not waiting for fault tolerance to begin delivering value. The Willow milestone shows that practical, near-term quantum operations are achievable within a scalable hardware platform, which is the prerequisite for early adoption and real customer use cases. If the field had to wait for fault-tolerant universality, the market would stall. In contrast, SV is taking the measured step of delivering improved coherence, lower error rates, and verifiable breakthroughs that translate into usable sub-systems and pilot workloads today. Google’s own reporting of Willow demonstrates those capabilities in action, with explicit evidence of error suppression and accelerated sampling tasks. This is a compelling counterpoint to the narrative that “hardware progress is always a decade away.” (blog.google)

Argument 2: Packaging and interconnect innovations will accelerate scalability far sooner than some skeptics expect. The advance of packaging technologies (EMIB-T and related approaches) is not tangential to quantum hardware; it is core to the ability to attach more qubits to a system without prohibitive signal losses and thermal constraints. SV-based packaging progress is a critical enabler for larger qubit counts and more integrated quantum-classical control systems. If SV players can demonstrate scalable packaging in tandem with qubit improvements, the time to multi-thousand-qubit machines becomes less of a black box and more of a managed program with clear milestones. This is precisely the kind of operational insight investors and enterprise buyers crave. (tomshardware.com)

Argument 3: The SV ecosystem benefits from a deep pool of talent, capital, and cross-disciplinary collaboration. PsiQuantum’s photonic approach, Rigetti’s full-stack hardware-and-software model, and QC Ware’s cloud-based multi-vendor access collectively demonstrate that SV is cultivating not only hardware breakthroughs but also the ecosystems, standards, and go-to-market channels needed to realize practical use. The presence of Palo Alto–based players working in tandem with Bay Area research institutions and the venture community creates a feedback loop that accelerates iteration, reduces risk, and builds a market-ready pipeline of applications that can exploit the early hardware gains in Willow-like devices. This is not mere hype; it’s a deliberate strategy that aligns the hardware, software, and market sides of the equation. (psiquantum.com)

Argument 4: The SV position is reinforced by a broader industry rhythm that values co-design and customer-driven development. IBM’s 2026- and 2029-era targets reflect not just hardware milestones but a recognition that practical utility will emerge from integrated systems—software stacks, compilers, error mitigation techniques, and optimization workflows that are co-developed with customers. The SV response to this rhythm is to emphasize an end-to-end capability: from qubits and cryogenics to cloud access, tooling, and modeled business value. This end-to-end approach is what gives SV a credible edge in 2026 and beyond, turning hardware progress into measurable industry outcomes. (ibm.com)

Counterarguments to address

- Critics may point out that global competition remains intense, with significant activity in Europe and Asia as well as in the United States. They may argue that fault-tolerant quantum computing remains the critical bottleneck, and that practical, scalable machines will not arrive in earnest until the latter part of the decade. Those critiques have merit, but they should not obscure the fact that the SV hardware ecosystem is now actively delivering near-term capabilities that customers can attempt to adopt, pilot, and scale. The Willow milestone, together with IBM’s roadmaps and packaging innovations, demonstrates that near-term hardware progress is both real and market-relevant. The industry’s attention to co-design and customer-driven development further reduces risk for early adopters who want to test quantum workloads today, not in a hypothetical future. (blog.google)

Section 3: What This Means

Implications for strategy, policy, and practice

Implication 1: Enterprises should plan multi-year, co-designed pilots that pair SV hardware suppliers with domain experts. The Willow-style demonstrations show that targeted tasks—such as optimization problems, quantum simulation of chemical systems, and sampling tasks—can be accomplished with hardware that sits inside the SV ecosystem. The practical takeaway is to structure pilots around measurable outcomes, not theoretical capabilities. As SV hardware matures, these pilots can become larger, more diverse, and more closely aligned with enterprise needs. The presence of multi-vendor access platforms and cloud-based quantum services in SV supports this approach and lowers the entry barrier for enterprise teams. (blog.google)

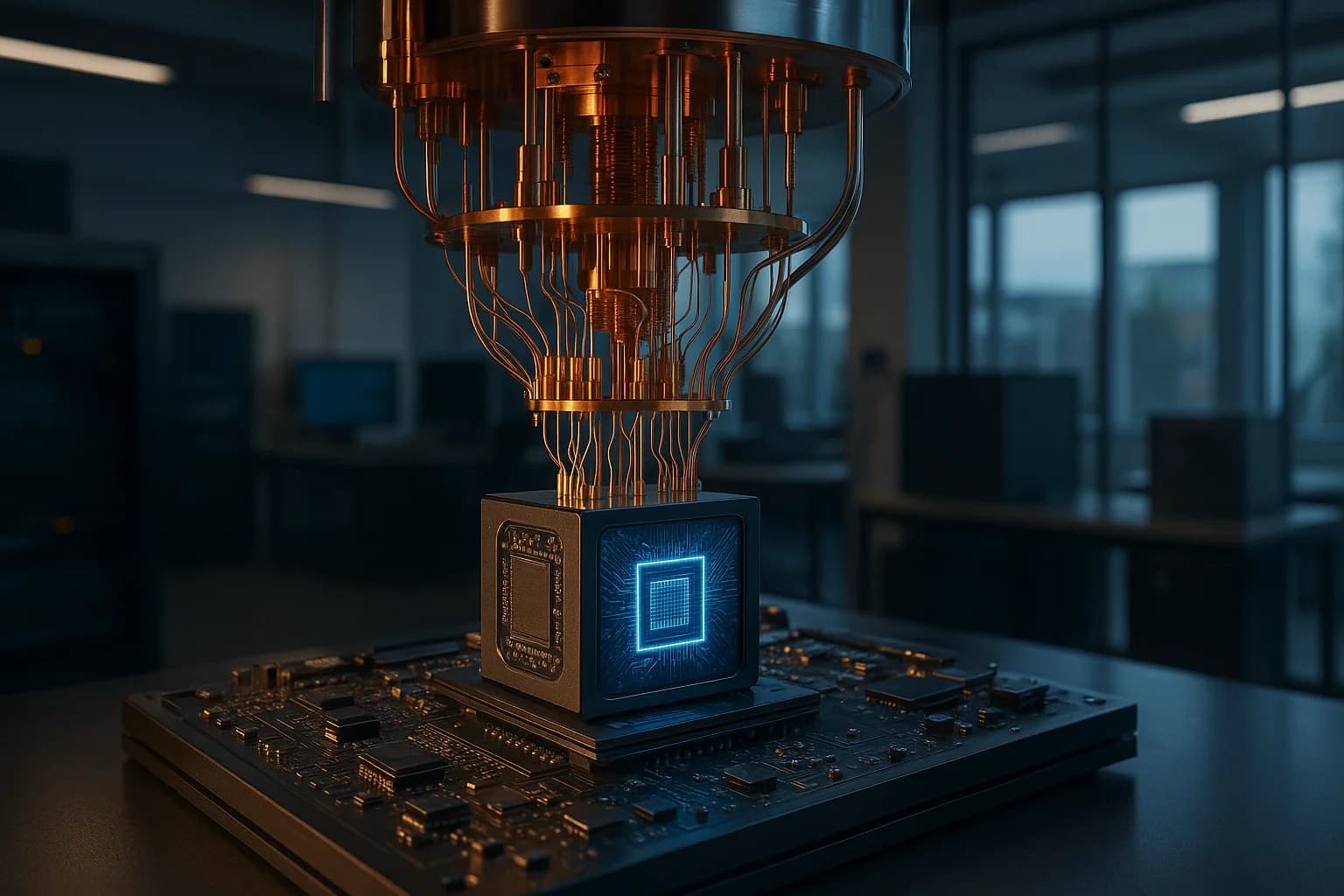

Photo by Laura Ockel on Unsplash

Implication 2: Policymakers and investors should recognize that hardware progress in SV is accelerating market readiness for quantum-enabled applications. Funding signals—such as rising interest in quantum computing across venture and corporate investment channels—are fueling both hardware development and the software, services, and tooling that will accelerate practical adoption. A 2025–2026 view of the market suggests that funding levels are rising in key geographies, with SV playing a central role due to its dense tech ecosystem. This dynamic matters for national strategy, technology-innovation policy, and the design of public-private partnerships that aim to translate lab breakthroughs into real-world value. (w.tracxn.com)

Implication 3: The SV approach suggests a constructive path for the rest of the industry: emphasize system-level integration, developer tooling, and interoperability. The Willow milestone shows that progress is not solely about adding more qubits; it is also about ensuring that qubits can be controlled, corrected, and measured reliably within scalable architectures. Enterprises should look for hardware partners in SV that offer robust tooling, cross-vendor compatibility, and realistic roadmaps for scaling, because those elements determine whether an organization can move from experimentation to production-ready workloads in a reasonable timeframe. The IBM and Google narratives, taken together, illustrate that hardware excellence must be matched with software and ecosystem readiness. (ibm.com)

Implication 4: The long-run trajectory remains fault-tolerant quantum computing as the ultimate objective, but 2026 is a year in which the pathway to that objective becomes clearer. The field’s long-term bets—scaling to thousands of qubits, achieving practical fault tolerance, and delivering universal quantum advantage—remain unresolved in the sense of a fixed date. Yet the 2026 SV momentum suggests that practical, near-term utility will arrive through optimized, specialized machines and computing environments that can be integrated into existing business workflows. This is an important distinction: the near-term market will be value-driven, while the long-term vision will continue to hinge on fault-tolerant, large-scale architectures. The SV ecosystem is positioning itself to win both timelines by focusing on end-to-end performance, reliability, and real-world use cases. (ibm.com)

Closing

In 2026, quantum computing hardware in Silicon Valley is not a ceremonial milestone; it is a practical inflection point. Silicon Valley’s hardware landscape has evolved from a collection of experimental labs into a coordinated, market-aware ecosystem that couples qubit physics with packaging, control, software, and enterprise deployment. Willow’s 105-qubit achievement, the progress toward scalable packaging, and the robust SV talent and capital infrastructure together create a compelling case that the valley will play a decisive role in delivering near-term quantum value. The road ahead will require continued investment in co-design, cross-vendor interoperability, and customer-led development, but the trajectory is unmistakably oriented toward turning quantum experiments into real-world capabilities. As stakeholders, researchers, and practitioners, we should prepare for a 2026–2027 window in which SV-authored advances translate into concrete pilots, clearer business cases, and tangible productivity gains across sectors.

The lessons from this moment are clear: do not treat quantum hardware as a distant dream; treat it as a structured program with measurable milestones, funded by a vibrant ecosystem that blends research, manufacturing, and market demand. If Silicon Valley remains faithful to that blend, 2026 will be remembered not as the year hardware finally proves its mettle, but as the year the Valley’s hardware pipeline began to reliably deliver practical, scalable quantum outcomes for a broad set of industries.

“Willow shows how to dramatically suppress errors as you scale,” Hartmut Neven observed in public coverage, underscoring a critical inflection point in which error correction becomes practical at larger qubit counts. This is not merely a novelty; it’s a signal that the valley’s hardware stack is beginning to reach a level of maturity where meaningful quantum tasks can be attempted in real-world contexts. The significance is not just the chip, but the ecosystem that makes the chip usable for real problems. (theguardian.com)

As we move forward, the Stanford Tech Review community should keep a data-driven view: map concrete, deployable workloads to specific SV hardware capabilities; track progress on packaging, qubit quality, and control electronics; and maintain a critical eye on roadmaps that connect performance milestones to practical product and market outcomes. The future of quantum computing hardware in Silicon Valley in 2026 and beyond rests on this disciplined, collaborative approach, one that aligns engineering precision with market needs and policy support, ensuring that the valley’s promise translates into durable, real-world impact.